Debezium relies on the Kafka Connect framework to provide high availability, but that does not include anything similar to a hot standby instance. It takes time for an existing connector to shut down and a new instance to come up, which might be acceptable for some use-cases but unacceptable in others. See this blog post by BlaBlaCar for a discussion and their solution around it. We would also like to see support for other data sinks besides Kafka.

We initially used a single Debezium connector per PostgreSQL database (instead of per host) but then moved to a single connector for each team. The main reasons for not running a single connector per PostgreSQL instance were related to workload isolation. Any team performing bulk data updates or deletions, or unplanned schema migrations, will only impact their own Debezium connector instead of the entire PostgreSQL instance, since Debezium filters events at its end according to the configured database and/or schema whitelist and blacklist. We are trying to identify possible issues with this configuration but haven’t found any yet. Moving to a single connector per team also removed a lot of management overhead around configuration changes, since we no longer need to coordinate between multiple teams when creating a release plan for a change. Although multiple replication slots on a single database do add overhead, we run fine with around 6 to 10 slots per database host without any noticeable performance impact.

We observed frequent EOF errors on the database connection on a few RDS instance sizes. We are still not sure of the cause, but initial investigations point to the issue happening only on instances that have PgBouncer attached (even when not connecting through PgBouncer) or on smaller instances (AWS t2/t3 series).

We got severely reduced throughput on tables with JSONB columns. After debugging, we were able to confirm that the cause was frequent schema refreshes by Debezium, triggered by TOASTed columns not being present in the replication message. This was fixed by changing the schema refresh setting to columns_diff_exclude_unchanged_toast and has since been documented.

Heartbeats are needed to control WAL disk space consumption in certain situations. Since this is a common use-case that comes up when using Debezium with PostgreSQL, an issue has been created to track it at DBZ-1815.

We had not enabled heartbeats in Debezium.
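To make the connector-level settings discussed so far more concrete (one connector and replication slot per team, the schema refresh change, and heartbeats), here is a minimal Python sketch that builds a connector definition. The property names `slot.name`, `schema.whitelist`, `schema.refresh.mode` and `heartbeat.interval.ms` are taken from the Debezium PostgreSQL connector documentation rather than from this post, and the helper name, team name, host name and 60-second heartbeat interval are purely hypothetical. Credentials and other required settings are left out.

```python
import json
import re


def team_connector_config(team, db_host, db_name, schemas, heartbeat_ms=60_000):
    """Build a Kafka Connect definition for one team's Debezium connector.

    Hypothetical helper: the naming scheme and default heartbeat interval
    are assumptions for illustration, not values from the post.
    """
    # PostgreSQL replication slot names may only contain lowercase letters,
    # digits and underscores, so normalise the team name first.
    slot = "debezium_" + re.sub(r"[^a-z0-9_]", "_", team.lower())
    return {
        "name": f"{team}-postgres-connector",
        "config": {
            "connector.class": "io.debezium.connector.postgresql.PostgresConnector",
            "database.hostname": db_host,
            "database.dbname": db_name,
            "database.server.name": team,
            # One replication slot per team-level connector.
            "slot.name": slot,
            # Only this team's schemas are captured by this connector.
            "schema.whitelist": ",".join(schemas),
            # Avoid schema refreshes triggered by unchanged TOASTed columns.
            "schema.refresh.mode": "columns_diff_exclude_unchanged_toast",
            # Emit heartbeats so the slot keeps advancing on quiet tables.
            "heartbeat.interval.ms": str(heartbeat_ms),
        },
    }


example = team_connector_config(
    "Payments-Core", "db.example.internal", "orders", ["payments", "billing"]
)
print(json.dumps(example, indent=2))
```

The resulting JSON document is the shape that the Kafka Connect REST API accepts when creating a connector, so each team can own and change its definition independently.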

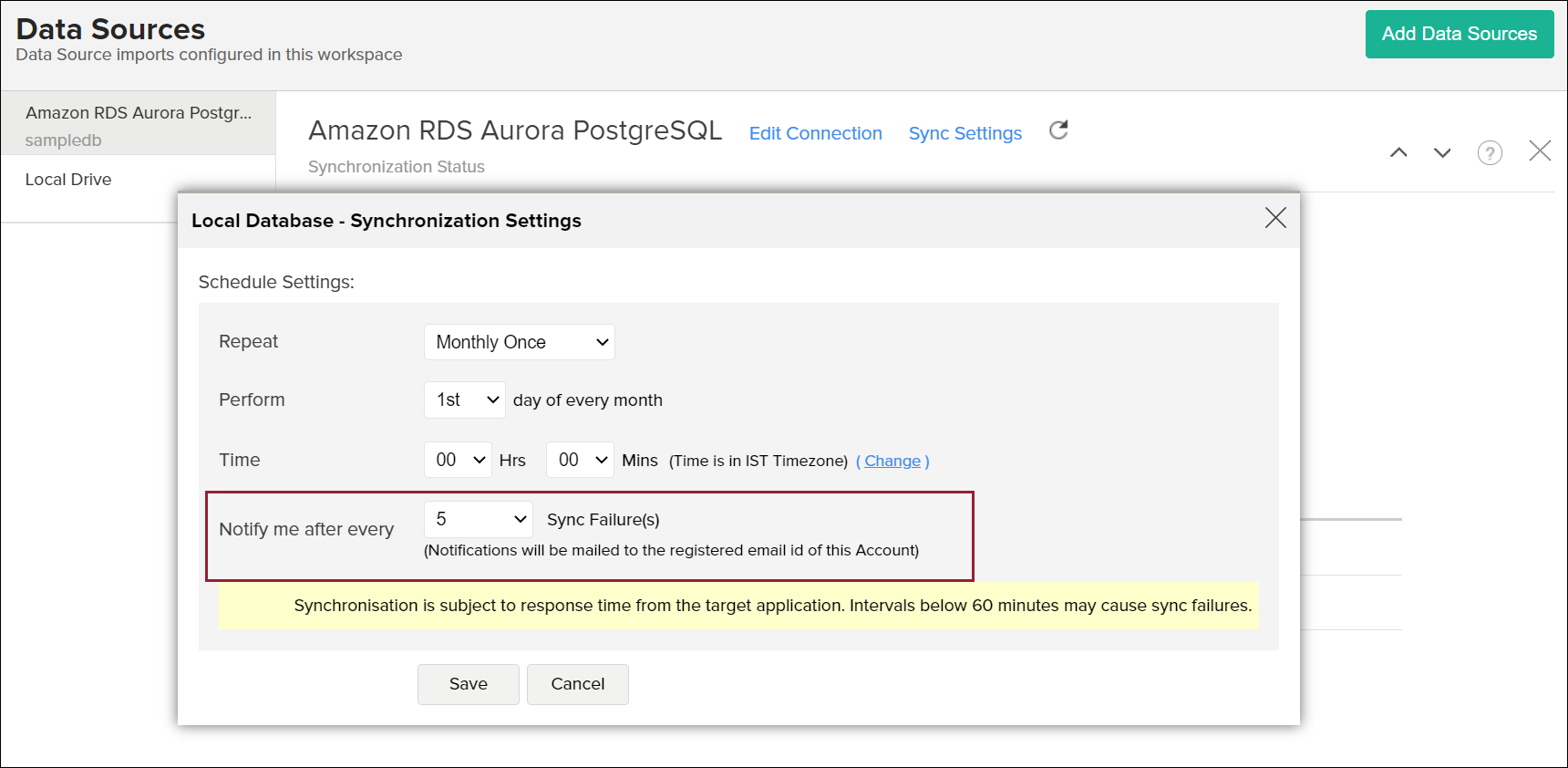

No alarms were set up on the transaction logs disk usage on the database. We added alarms on the RDS metrics TransactionLogsDiskUsage and OldestReplicationSlotLag to alert us when the transaction logs disk usage rises above a threshold or when a replication slot starts lagging, which would mean that Debezium might have died. Always ensure basic hygiene checks like database disk usage, transaction logs disk usage, and the network and disk bandwidth used for read and write operations.

We were observing a few data types with base64-encoded data. As documented, that’s the default for NUMERIC columns, but it can be difficult for consumers to handle. To convert to a format that’s easier to parse at the expense of some accuracy loss, we configured the data-type-specific properties as documented. Specifically, set the decimal handling property to string to receive NUMERIC, DECIMAL and equivalent types as a string (e.g. "3.14"), and set the HSTORE handling property to json to receive HSTORE columns as a JSON string.

We were producing JSON messages with schemas enabled. This creates larger Kafka records than needed, in particular if schema changes are rare. Hence we decided to disable message schemas by setting the two schema-related properties to false, which reduces the size of each payload considerably and saves on network bandwidth and serialization/deserialization costs. The only downside is that we now need to maintain the schema of those messages in an external schema registry.

On PostgreSQL 10 and later, use the pgoutput plugin.
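As a rough illustration of the serialization settings above, the following Python sketch lists the properties we believe the text refers to. The names `key.converter.schemas.enable`, `value.converter.schemas.enable`, `decimal.handling.mode`, `hstore.handling.mode` and `plugin.name` come from the Kafka Connect and Debezium documentation and are an assumption here, since the post itself does not spell them out. The helper `decode_precise_decimal` is hypothetical and shows what a consumer would otherwise have to do with the base64 form, assuming the usual Kafka Connect Decimal encoding (big-endian two's-complement unscaled value, with the scale carried in the schema).

```python
import base64
from decimal import Decimal

# Assumed property names; they can be set on the Connect worker or
# overridden per connector.
SERIALIZATION_SETTINGS = {
    "plugin.name": "pgoutput",                   # PostgreSQL 10 and later
    "key.converter.schemas.enable": "false",     # drop the schema from keys
    "value.converter.schemas.enable": "false",   # drop the schema from values
    "decimal.handling.mode": "string",           # NUMERIC/DECIMAL as "3.14"
    "hstore.handling.mode": "json",              # HSTORE as a JSON string
}


def decode_precise_decimal(encoded, scale):
    """Decode a base64 decimal as emitted when decimals are kept precise.

    `scale` is not part of the payload; it has to come from the message
    schema or from prior knowledge of the column definition.
    """
    raw = base64.b64decode(encoded)
    unscaled = int.from_bytes(raw, byteorder="big", signed=True)
    return Decimal(unscaled).scaleb(-scale)
```

For example, `decode_precise_decimal("ATo=", 2)` yields `Decimal("3.14")`. Needing the scale out of band is one reason the string form is easier for consumers, especially once message schemas are disabled.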
